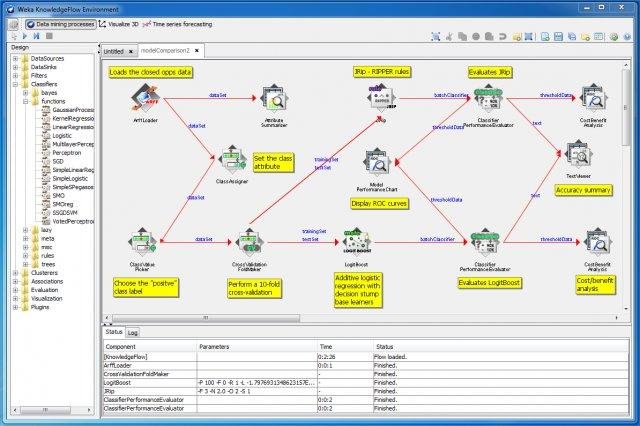

M /trunk/weka/src/main/java/weka/gui/EnvironmentField.java
M /trunk/weka/src/main/java/weka/gui/explorer/PreprocessPanel.java

Fixed NPEs that occur on startup of GUIs when using the Nimbus look and feel
M /trunk/weka/src/main/java/weka/gui/experiment/SimpleSetupPanel.java
M /trunk/weka/src/main/java/weka/gui/experiment/SetupPanel.java
M /trunk/weka/src/main/java/weka/gui/experiment/SetupModePanel.java
M /trunk/weka/src/main/java/weka/gui/beans/BeanVisual.java
M /trunk/weka/src/main/java/weka/gui/PropertySheetPanel.java

Support for the consequence of a rule is now explicitly stored in ItemSet in order to prevent numerical problems stemming from reconstructing this from confidence, total support and lift when constructing the list of AssociationRule objects.
M /trunk/weka/src/main/java/weka/associations/ItemSet.java
M /trunk/weka/src/main/java/weka/associations/AprioriItemSet.java
M /trunk/weka/src/main/java/weka/associations/Apriori.java

User can specify how many pixels the chart is shifted by every time a point is plotted. Now checks that points aren't set to missing value before plotting.
M /trunk/weka/src/main/java/weka/gui/beans/StripChartBeanInfo.java
M /trunk/weka/src/main/java/weka/gui/beans/StripChart.java

R11998 | mhall | 14:04:54 +1200 (Fri, ) | 1 line
Changed version number props file back to 3.7.13 for mvn release
M /trunk/weka/src/main/java/weka/core/version.txt

Weka/classifiers/functions/MLPModel$1.java
Weka/classifiers/functions/MLPClassifier.java
Weka/classifiers/functions/loss/SquaredError.java
Weka/classifiers/functions/loss/LossFunction.java
Weka/classifiers/functions/loss/ApproximateAbsoluteError.java
Weka/classifiers/functions/activation/Softplus.java
Weka/classifiers/functions/activation/Sigmoid.java
Weka/classifiers/functions/activation/ApproximateSigmoid.java
Weka/classifiers/functions/activation/ActivationFunction.java

MultiLayerPerceptrons

This package currently contains classes for training multilayer perceptrons with one hidden layer, where the number of hidden units is user specified. MLPClassifier can be used for classification problems and MLPRegressor is the corresponding class for numeric prediction tasks. The former has as many output units as there are classes, the latter only one output unit. Both minimise a penalised squared error with a quadratic penalty on the (non-bias) weights, i.e., they implement "weight decay", where this penalised error is averaged over all training instances. To control overfitting, the user can set the size of the penalty by modifying the "ridge" parameter. The sum of squared weights is multiplied by this parameter before being added to the squared error.

Both classes use BFGS optimisation by default to find parameters that correspond to a local minimum of the error function, but optionally conjugate gradient descent is available, which can be faster for problems with many parameters. Logistic functions are used as the activation functions for all units apart from the output unit in MLPRegressor, which employs the identity function. Input attributes are standardised to zero mean and unit variance. MLPRegressor also rescales the target attribute (i.e., "class") using standardisation. All network parameters are initialised with small normally distributed random values.
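The penalised squared error described here can be illustrated with a small, self-contained sketch. This is not the package's own code: the class name `PenalisedSquaredError`, its method names, and the example numbers are hypothetical, and the sketch assumes no extra scaling constants (such as a factor of 1/2) beyond what the description states. It takes the network outputs as given, adds the ridge-weighted sum of squared non-bias weights to the squared error, and averages the result over the training instances.

```java
// Illustrative sketch only; names are hypothetical and not part of Weka.
final class PenalisedSquaredError {

    private PenalisedSquaredError() {}

    /** Squared error summed over all instances and all output units. */
    public static double squaredError(double[][] predictions, double[][] targets) {
        double sum = 0.0;
        for (int i = 0; i < targets.length; i++) {
            for (int k = 0; k < targets[i].length; k++) {
                double diff = targets[i][k] - predictions[i][k];
                sum += diff * diff;
            }
        }
        return sum;
    }

    /** Sum of squared weights; bias weights are assumed to be excluded by the caller. */
    public static double sumOfSquaredWeights(double[] nonBiasWeights) {
        double sum = 0.0;
        for (double w : nonBiasWeights) {
            sum += w * w;
        }
        return sum;
    }

    /**
     * Penalised error: the sum of squared weights is multiplied by the ridge
     * parameter, added to the squared error, and the total is averaged over
     * the number of training instances.
     */
    public static double penalisedError(double[][] predictions, double[][] targets,
                                        double[] nonBiasWeights, double ridge) {
        if (targets.length == 0) {
            throw new IllegalArgumentException("At least one training instance is required");
        }
        double penalty = ridge * sumOfSquaredWeights(nonBiasWeights);
        return (squaredError(predictions, targets) + penalty) / targets.length;
    }

    public static void main(String[] args) {
        // Two instances, two output units (one per class, as in a classifier).
        double[][] predictions = {{0.8, 0.2}, {0.4, 0.6}};
        double[][] targets = {{1.0, 0.0}, {0.0, 1.0}};
        double[] nonBiasWeights = {0.5, -1.0, 2.0};

        System.out.println(penalisedError(predictions, targets, nonBiasWeights, 0.01));
    }
}
```

A larger ridge value makes large weights more costly relative to the fit on the training data, which is how the parameter controls overfitting. For a regressor with a single output unit, each row of `predictions` and `targets` would simply have length one.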

#Weka jar download

JMaven nz.ac. multiLayerPerceptrons Download multiLayerPerceptrons nz.ac. :
